SWE-agent

SWE-agent is an open-source CLI autonomous coding agent from Princeton and Stanford that uses a custom Agent-Computer Interface to let LLMs fix GitHub issues and complete coding tasks autonomously.

SWE-agent: A Cursor Alternative for Open-Source Research-Grade Autonomous Bug Fixing

SWE-agent is an open-source autonomous CLI coding agent built by researchers at Princeton University and Stanford University. It uses a custom Agent-Computer Interface (ACI) to let large language models autonomously read code, plan fixes, write implementations, and run tests against real GitHub issues. As a Cursor alternative, it targets researchers, open-source contributors, and advanced developers who want a fully transparent, modifiable AI agent for automated software engineering tasks without vendor lock-in.

SWE-agent vs. Cursor: Quick Comparison

| | SWE-agent | Cursor |
|---|---|---|
| Type | Open-source CLI autonomous agent | Standalone IDE (VS Code fork) |
| Pricing | Free (open source); pay only for LLM API calls | Free / $20 / $40 per month |
| LLM choice | GPT-4o, Claude, Gemini, local models | Built-in models + own key |
| Offline / local models | Yes (via Ollama or compatible providers) | No |
| Open source | Yes (MIT license) | No |
| Codebase indexing | File-level navigation via ACI tools | Yes (automatic) |
| Multi-file edits | Yes | Yes |

Key Strengths

- Fully open source with MIT license: SWE-agent is entirely open source under a permissive license. Every component (the agent framework, the ACI interface, the run harness, and the evaluation scripts) is available for inspection, modification, and redistribution. This transparency contrasts with Cursor's closed proprietary stack and makes SWE-agent a strong choice for researchers, auditors, or developers who need to understand and modify every layer of their AI coding toolchain.

- Model-agnostic design: SWE-agent works with any LLM provider that offers an API: OpenAI (GPT-4o, GPT-4), Anthropic (Claude 3.5 Sonnet, Claude 3 Opus), Google (Gemini), and even local models via Ollama. This makes it genuinely provider-neutral—you choose the best model for your task and budget, and SWE-agent adapts. No vendor lock-in, no forced upgrades to a new proprietary model tier.

- State-of-the-art autonomous bug resolution: SWE-agent was benchmarked on SWE-bench, the standard academic benchmark for AI software engineering, and achieved state-of-the-art results at the time of release. The ACI—a custom interface that gives the LLM structured tools to navigate files, view code, run commands, and edit files—is specifically designed to maximize the LLM's ability to work autonomously on real repository-scale problems.

- Zero per-seat cost, pay only for LLM API calls: Unlike Cursor ($20–40/month per seat) or other commercial tools, SWE-agent itself costs nothing. Teams pay only for LLM API calls, which they can reduce by choosing cost-efficient models or routing simpler tasks to cheaper providers. For high-volume automated bug triage in CI/CD pipelines, where cost scales with usage rather than with the number of seats, this cost model is considerably more favorable.

Known Weaknesses

- No graphical interface: SWE-agent is CLI-only. There is no GUI, no IDE integration, and no visual diff viewer. Developers who want to review AI changes inline within their editor will need to use separate tooling to inspect and apply SWE-agent's output. This adds friction for interactive use compared to Cursor's integrated experience.

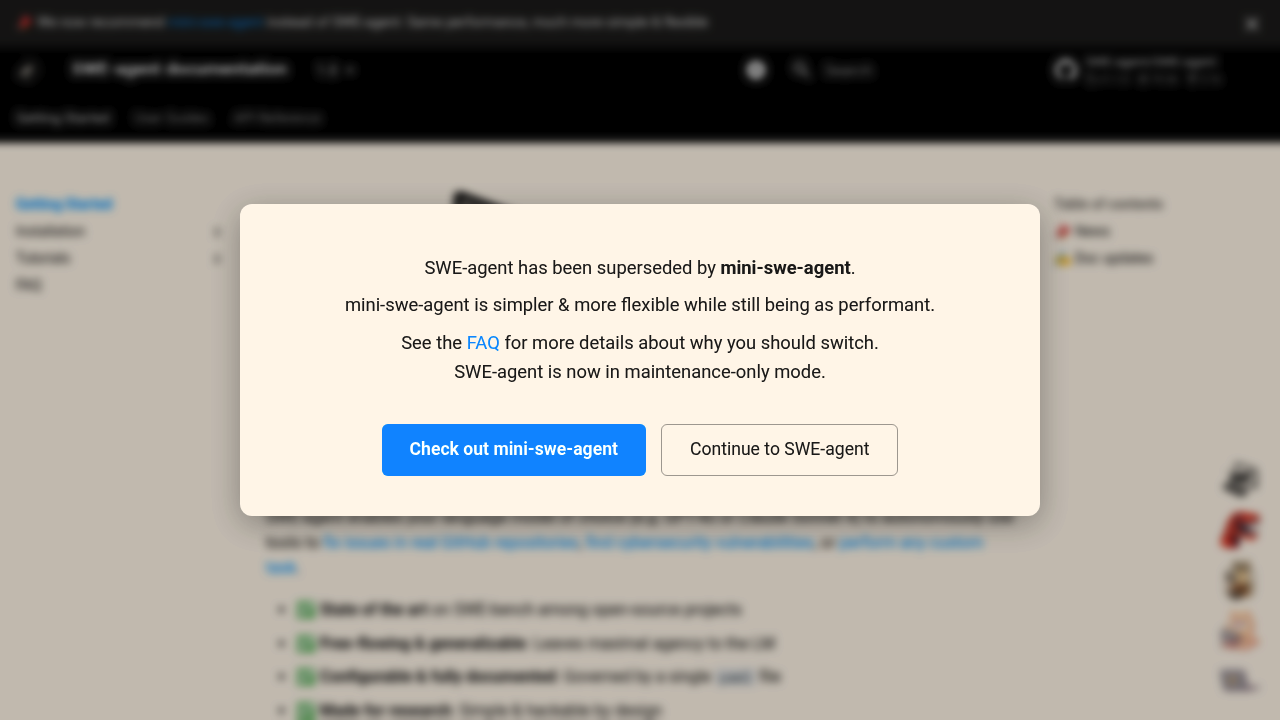

- Primarily research-oriented, not developer-experience-optimized: SWE-agent was created as a research tool to advance the science of AI software engineering. It is actively evolving (the team is building mini-swe-agent as a successor), which means the API and usage patterns can change between versions. Production teams building workflows on top of SWE-agent need to account for this instability.

- Requires LLM provider API keys: Unlike self-contained tools, SWE-agent requires you to supply your own API keys for LLM providers. Setting up accounts, managing keys, and understanding token-based billing adds setup overhead that Cursor's all-in-one subscription model avoids.

Best For

SWE-agent is best suited for researchers studying AI software engineering, open-source contributors who want to automate GitHub issue triage and resolution, and advanced developers building automated testing or bug-fixing pipelines who need full transparency into the agent's behavior. It's also ideal for teams that want to run AI-assisted bug fixing in CI/CD pipelines where per-seat subscription costs would be prohibitive, and for anyone who wants to experiment with or extend an AI coding agent at the framework level.

Pricing

- SWE-agent itself: Free (open source, MIT license) — install via pip

- LLM API costs (examples):
  - OpenAI GPT-4o: ~$5 input / $15 output per million tokens
  - Anthropic Claude 3.5 Sonnet: ~$3 input / $15 output per million tokens
  - Local models via Ollama: Free (self-hosted compute only)

LLM API prices are subject to change. Check each provider's official pricing page for current details. See SWE-agent documentation for supported models.
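To make the per-token pricing concrete, the following is a minimal sketch of how the cost of a single run could be estimated from the approximate prices listed above. The token counts (400,000 input, 20,000 output) are hypothetical figures chosen for illustration, not measurements of a real SWE-agent run, and the names `Price`, `PRICES`, and `run_cost` are assumptions introduced here.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Price:
    """Price in USD per one million tokens."""
    input_per_million: float
    output_per_million: float


# Approximate prices quoted in this article; they may be out of date.
PRICES = {
    "gpt-4o": Price(5.0, 15.0),
    "claude-3.5-sonnet": Price(3.0, 15.0),
    "ollama-local": Price(0.0, 0.0),  # self-hosted: no API fee, compute only
}


def run_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Return the estimated API cost in USD for one agent run."""
    price = PRICES[model]
    total = (input_tokens * price.input_per_million
             + output_tokens * price.output_per_million)
    return total / 1_000_000


if __name__ == "__main__":
    # Hypothetical token usage for a single issue-fixing run.
    for model in PRICES:
        print(f"{model}: ${run_cost(model, 400_000, 20_000):.2f}")
```

Under these assumed token counts, the estimate comes to about $2.30 for GPT-4o and $1.50 for Claude 3.5 Sonnet. Actual usage varies widely with repository size and the number of agent steps.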

Technical Details

- Models supported: GPT-4o, GPT-4, Claude 3.5 Sonnet, Claude 3 Opus, Gemini, local models via Ollama

- Context window: Depends on chosen LLM provider

- IDE / platform: CLI (command line), no GUI or IDE extension

- Offline / local models: Yes — via Ollama or compatible local inference servers

- Codebase indexing: File-level navigation using custom ACI tools (view, search, edit, run)

- API access: N/A — SWE-agent is a CLI tool, not a service

- Open source: Yes — MIT license, fully open source on GitHub
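The idea behind file-level navigation through ACI tools (view, search, edit) can be illustrated with a toy sketch. This is not SWE-agent's actual implementation or command set: the class `FileViewer`, its method names, and the default window size are assumptions made for illustration. The sketch shows only the general pattern of giving a model a bounded window onto a file instead of the whole file.

```python
from dataclasses import dataclass


@dataclass
class FileViewer:
    """Toy windowed file viewer; names and defaults are hypothetical."""
    lines: list[str]
    window: int = 100  # number of lines shown per view
    top: int = 0       # zero-based index of the first visible line

    def view(self) -> str:
        """Render the current window with 1-based line numbers."""
        end = min(self.top + self.window, len(self.lines))
        body = "\n".join(
            f"{i + 1}: {self.lines[i]}" for i in range(self.top, end)
        )
        return f"[lines {self.top + 1}-{end} of {len(self.lines)}]\n{body}"

    def goto(self, line_number: int) -> str:
        """Move the window so it starts at line_number, clamped to the file."""
        max_top = max(len(self.lines) - self.window, 0)
        self.top = min(max(line_number - 1, 0), max_top)
        return self.view()

    def scroll_down(self) -> str:
        """Advance the window by one full window of lines."""
        return self.goto(self.top + 1 + self.window)

    def search(self, term: str) -> list[int]:
        """Return the 1-based numbers of lines containing term."""
        return [i + 1 for i, line in enumerate(self.lines) if term in line]

    def edit(self, start: int, end: int, replacement: list[str]) -> str:
        """Replace lines start..end (1-based, inclusive) and show the result."""
        self.lines[start - 1:end] = replacement
        return self.view()


if __name__ == "__main__":
    viewer = FileViewer([f"line {n}" for n in range(1, 11)], window=3)
    print(viewer.view())
    print(viewer.scroll_down())
    print(viewer.search("line 1"))
```

Returning a fresh view after every `edit` or `goto` gives the model immediate feedback on the effect of each action, which is the kind of structured interaction the ACI is described as providing.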

How It Compares to Cursor

Cursor is a commercial AI IDE designed for real-time developer productivity—it integrates AI into the coding flow with a polished GUI and subscription-based access. SWE-agent is a research-grade open-source agent designed for autonomous end-to-end software engineering tasks, particularly resolving GitHub issues without human involvement during execution. Cursor is better when you want an AI co-pilot while actively writing code; SWE-agent is better when you want to fully automate issue resolution, run experiments on AI agent behavior, or build automated software engineering pipelines.

Conclusion

SWE-agent is the right choice over Cursor for developers and researchers who prioritize full transparency, model flexibility, and zero per-seat cost over polished UX. If you're building automated bug-fixing pipelines, studying AI agent behavior, or simply want a fully open-source coding agent you can inspect, modify, and run with any LLM, SWE-agent offers that combination at no licensing cost, which commercial tools such as Cursor do not.

FAQ

Is SWE-agent free?

Yes. SWE-agent itself is completely free and open source under the MIT license. You only pay for the LLM API calls made during runs (to OpenAI, Anthropic, etc.), or use free local models via Ollama. There are no per-seat fees, subscription tiers, or licensing costs for SWE-agent itself.

Does SWE-agent work with VS Code?

SWE-agent does not have a VS Code extension. It is a command-line tool only. You run it from your terminal, and it outputs file changes that you can then review and commit using any editor or IDE you prefer, including VS Code.

How does SWE-agent compare to Cursor?

Cursor is a commercial AI IDE that assists you in real time as you write code. SWE-agent is an open-source CLI agent that autonomously resolves GitHub issues from start to finish without human intervention during the run. Cursor is better for interactive daily coding; SWE-agent is better for automated, batch, or research-oriented software engineering tasks.

Which LLM models work best with SWE-agent?

Based on published SWE-bench results, GPT-4o and Claude 3.5 Sonnet deliver the strongest performance. For cost-efficiency, GPT-4o mini or smaller Claude models work for simpler tasks. Local models via Ollama (Qwen2.5-Coder, DeepSeek-Coder) are free but typically less capable on complex repository-scale tasks than frontier models.

Similar alternatives in category

Claude Code

Terminal-first AI coding assistant for autonomous development tasks.

Aider

Aider is a leading open-source AI pair programming tool that allows you to edit code in your local git repository directly from the terminal or through various community GUIs.

Plandex

Open-source terminal-based AI coding agent for complex multi-file development tasks.